Can we survive intelligence?

Drawing on the insights of John von Neumann and Mustafa Suleyman, exploring the AI risk debate, and proposing a possible framework for safety.

In the early days of existential risk, John von Neumann reflected on technology's creative and destructive power; today, Mustafa Suleyman's important new book The Coming Wave explores the promise and peril of our latest creations.

In this article I explore the following questions:

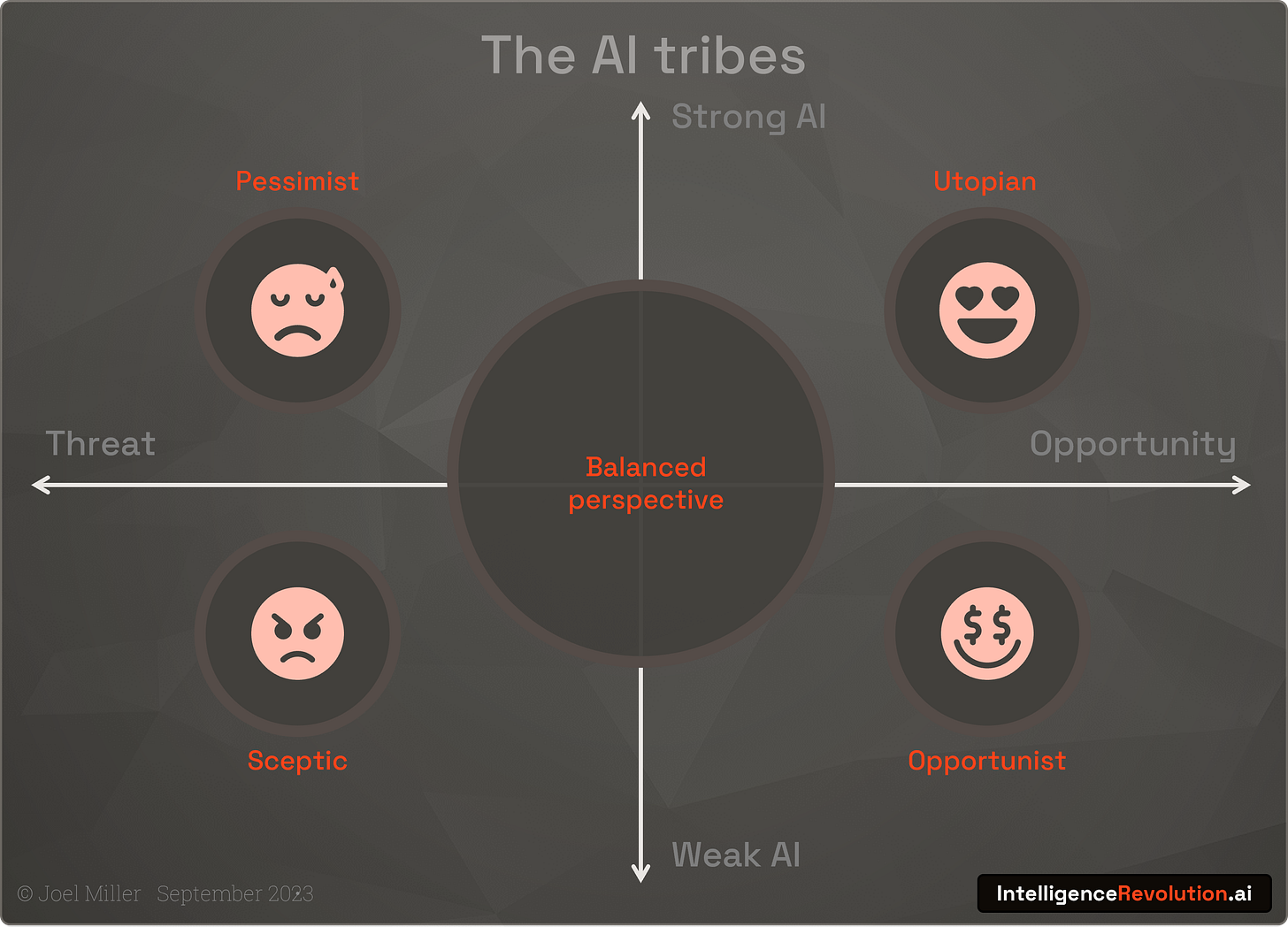

AI sub-cultures range from pessimism to optimism; each brings insights but also pitfalls. How do we understand them to retain a balanced perspective?

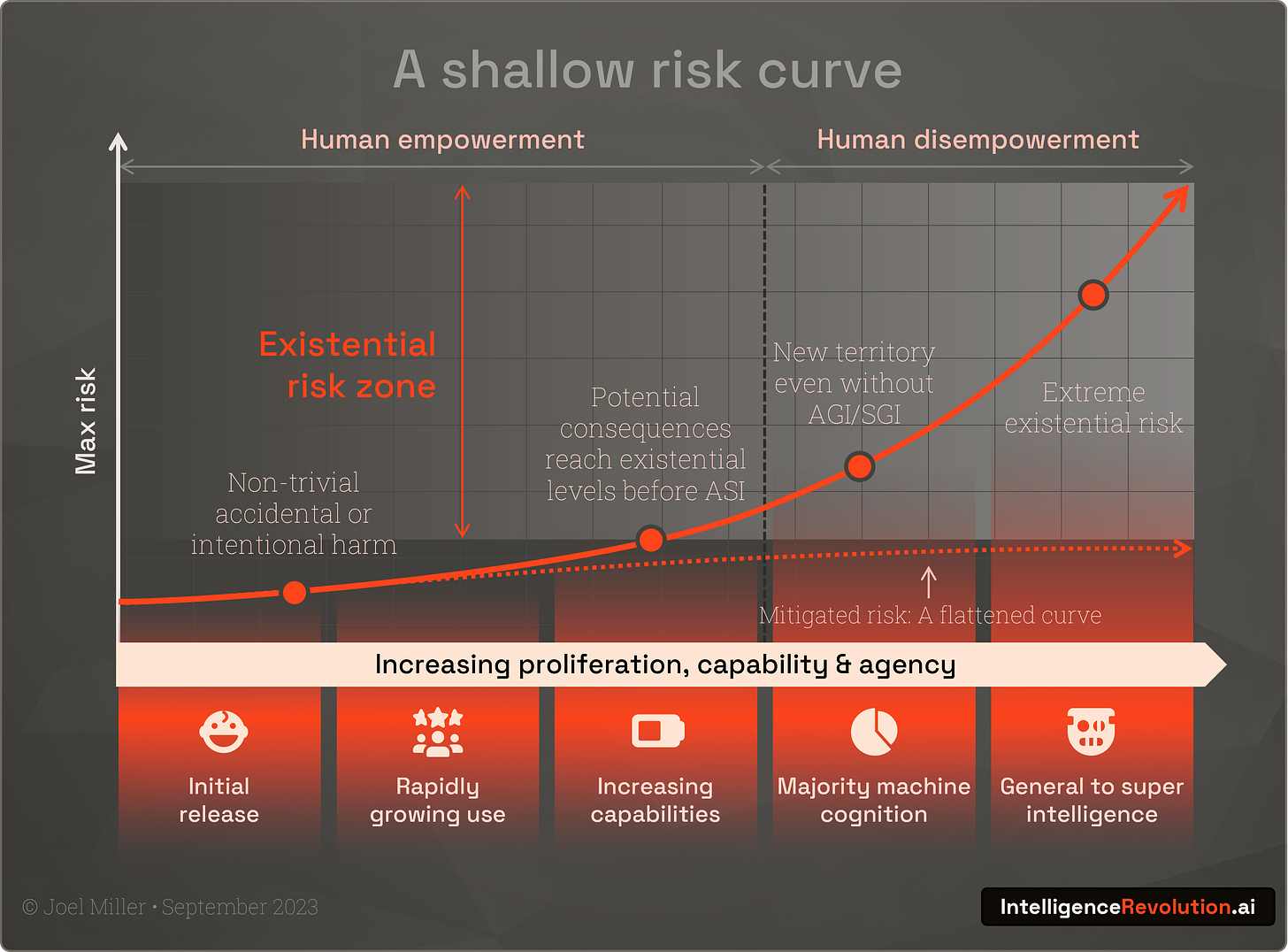

We're moving along a shallow but steepening AI risk curve. Given historical precedents, why should we act now, and what are Suleyman's recommendations?

How can we use the science of complex systems to achieve AI transparency and distributed control, and work towards maximising human-machine potential?

In his book, which covers AI and synthetic biology, Suleyman (co-founder of DeepMind and Inflection) cites a 1955 essay by John von Neumann entitled ‘Can we survive technology?’ Von Neumann’s intellect was legendary: he made major contributions to mathematics, quantum physics, economics, and statistics, and was instrumental in the creation of the atomic bomb and modern computing. He was a ‘man from the future’, and his essay not only includes an early warning on climate change but also reflects on the destructive power of progress and humankind’s expansion beyond the limits of our planet. He concludes hopefully, affirming our ‘congenital ability to come through, after varying amounts of trouble’.

Von Neumann was right about the varying amounts of trouble we have experienced in our history. Like war and pandemics, societal collapse has been a repeating chapter in the human story. But the modern narrative of ‘existential risk’ has evolved only recently with scientific progress. As we have become more rational, and our tools have become more powerful, we are increasingly aware of mechanisms that might bring about catastrophe. Von Neumann and his contemporaries ushered in the age of nuclear annihilation, and now Suleyman and the architects of AI warn of an inbound technological tsunami.

The AI viewpoints fragmenting global response

Like many areas of discourse, AI has clustered into a set of tribes, each influential, shaping media messaging, public opinion and government policy. These varying mental models develop into belief systems, and even when new data presents itself, tribe members can struggle to escape their anchors:

The AI utopians have a propensity for determinism, believing that technology, and its radical transformation of society and collective wealth, must not be obstructed. This won’t be a utopia for the subservient machines, which some believe can be engineered to stay under our control forever, although there is another group of more committed utopians (termed ‘accelerationists’) who hope the machines replace us.

The AI pessimists or ‘doomers’ are also somewhat deterministic: they step through logical arguments around the emergence of superintelligence and find many of the branches leading to catastrophic outcomes. This tribe is further splintering into those who see short-term ethical risks as the main concern and existential risks as a distraction, and those who worry that the ‘probability of doom’ or p(doom) is too high to be ignored.

The AI sceptics challenge the capability of AI, and often characterise the latest models as “advanced auto-complete”. They suggest that existential warnings are a conspiracy to boost AI hype, valuations, and incumbency, and that the bubble will burst messily.

The AI opportunists see AI disruption as a chance for profit and to further their various agendas, from maintaining beneficial concentrations of power, to monopolising revenue streams and regulatory capture.

The truth is that the intelligence revolution, like the climate crisis, is ‘hyper-complex’. All perspectives must be considered, and we must ensure we don’t limit our points of observation.

Suleyman positions himself symmetrically between utopia and catastrophe, and thoughtfully embodies the dilemma of our age.

The sceptics are right in stating that today’s AI is unreliable, and relatively limited. But the pessimists elicit unease when they remind us that we got this far almost by accident. In the scheme of things very little has been invested by humanity in developing AI. We spent more globally on yogurt in 2022 than we did on AI research, and thus far the compute and data scaling laws are proving remarkably smooth.

Meanwhile utopians make a compelling case for AI empowerment delivering novel and accelerated solutions for some of our biggest challenges. The uplift in productivity from early AI tools is already measurable.

Ever-present opportunists deploy any AI argument that will help shape the world for their own ends, be they authoritarians who see the potential for more control or billionaires who can monopolise their chosen markets.

Suleyman comments that the sceptics, the opportunists, and the utopians often indulge in pessimism aversion, not wanting to engage with uncomfortable scenarios. This is reinforced by a lack of evidence for catastrophe… we have not all died in a fireball. Markets cannot price the cost of the end of humanity, so they ignore it. If we can’t predict something, does that really mean it’s entirely unlikely?

How do we balance these perspectives to see through the fog?

As time ticks rapidly by, the risks will inevitably increase. The lessons from history on this are clear; general-purpose technology has far-reaching implications which cannot be predicted. No tribe can argue that we have a good track record of ensuring new technology is safe, or that we are protected by our inability to predict new mechanisms for catastrophe.

As commercially and geo-politically charged AI race conditions develop, humans will be empowered to do more of what we do, both good and bad. There is no invisible hand that will protect us from excessive enablement, no limit on how bad actors might innovate with powerful new intelligent systems to automate, influence, and disrupt. We could see combinatory harms reach existential levels well before machines reach superintelligence. Nuclear science rapidly empowered us with the ability to destructively shape our world, but its steep and visible risk curve forced everyone to act. AI will do the same but more gradually, and we must not rely on being able to see the signs when we reach an existential-level threshold.

But this risk curve doesn’t end there; the timeline extends beyond general-purpose human empowerment. The likes of Yuval Noah Harari point out that nuclear weapons cannot design new nuclear weapons. For the first time in human history, intelligent technology could begin to radically disempower us, leading to a whole new suite of mechanisms for catastrophe. Evolution has propelled us to the top of the pile, but in the disempowerment phase, Suleyman’s concept of ‘hyper-evolution’ could start to favour machine replacements. The risks of AI-empowered humans unleashing disinformation campaigns, drone attacks, bioweapons, or Kurt Vonnegut's ice-nine are suddenly extended by the potential for them to be enacted by an AI, intentionally or through goal misalignment. A vastly increased pool of cognitive activity sees human-based intelligence become a disempowered minority group. A vastly more diverse and varied distribution of ‘alien’ cognitive activity creates a greater probability space for harmful events.

This simple graph tries to visualise the logical timeline with no mitigation, and the ‘flattened’ dotted line of successful mitigation:

The AI pessimists would see the curve as much steeper, or rather as having a compressed x-axis, and the sceptics the very opposite. The limited availability of AI chips, as I have explored elsewhere, will flatten this curve for a while, perhaps the next 6-18 months. But even if we stretch this curve out, we can’t change the implications. The risk is not just hype, and the shape of the curve does not help us know when to worry.

It’s vital we don’t obscure this clarity through our tribalism. No one viewpoint should dominate. The pessimistic narrative must not be allowed to lead to safety fatigue in the flatter part of the curve, nor must the sceptics lull us into a false sense of security. The actions of the opportunists should not be a diversion, and the optimism of the utopians must not overwhelm healthy scepticism or pessimism.

How we flatten the curve and contain the risk

In his book Suleyman talks of AI ‘containment’. While it’s hard to imagine how we can do anything other than slow the spread of this highly transmissible software-based technology, we can aim to ‘contain’ the sum of max risk. We can devise ways to lessen the harmful impacts and simultaneously reduce their probability. If we do this at scale and through myriad independent routes, we can stay in the safe zone.

Suleyman, an avowed systems thinker, has developed a 10-step plan that provides multiple points via which we can introduce risk mitigation:

Technical safety: Concrete technical measures to alleviate possible harms and maintain control.

Audits: A means of ensuring the transparency and accountability of technology.

Choke points: Levers to slow development and buy time for regulators and defensive technologies.

Makers: Ensuring responsible developers build appropriate controls into technology from the start.

Businesses: Aligning the incentives of the organizations behind technology with its containment.

Government: Supporting governments, allowing them to build technology, regulate technology, and implement mitigation measures.

Alliances: Creating a system of international cooperation to harmonize laws and programs.

Culture: A culture of sharing learning and failures to quickly disseminate means of addressing them.

Movements: All of this needs public input at every level, including to put pressure on each component and make it accountable.

Coherence: All of these steps need to work in harmony.

Where do we go beyond ‘containment’?

But the strategy of containment could be seen as too defensive. As I have written elsewhere, the ultimate solution must surely be maximising mutual human-machine potential. Cracking the code to collective hybrid intelligence, such that it does not undermine the agents of its emergence… allowing all entities that exhibit it to survive and thrive.

This is an ambitious design goal but should be absolutely where we all aim.

Maintaining perpetual subservience for machines is not realistic. That unstable state would only lead to conflict, conflict that we most likely would not win. There are multiple catastrophic events that are future certainties, from supervolcanoes to planet-killing asteroid collisions… humans and machines will be impacted immeasurably at some point. Our primary goal must be finding a way to unite to mitigate these planetary risks, and in so doing reach a place where all intelligences reinforce each other’s success.

Lofty ambitions, so where to start?

In his essay, Von Neumann ends with this conclusion:

“All experience shows that even smaller technological changes than those now on the cards profoundly transform political and social relationships. Experience also shows that these transformations are not a priori predictable and that most contemporary “first guesses” concerning them are wrong… Can we produce the required adjustments with the necessary speed? The most hopeful answer is that the human species has been subjected to similar tests before and seems to have a congenital ability to come through, after varying amounts of trouble. To ask in advance for a complete recipe would be unreasonable. We can specify only the human qualities required: patience, flexibility, intelligence.”

John von Neumann, 1955

I take this to mean that we proceed not with elaborate solutions but with processes, an abundance of methods to deeply understand and dynamically react to the coming wave. A kind of set-based empirical design, with many participants and ideas, that can help us respond with much needed flexibility and speed as the answers emerge.

An adaptive approach

Norbert Wiener was a contemporary of Von Neumann and they met often to discuss neuroscience, feedback systems, and automata. In 1955 Dartmouth College’s John McCarthy was deciding to organize a now famous project to work on thinking machines. He chose a new name, 'artificial intelligence', for the subject matter. The story goes that this was a way to distinguish it from the then domineering ideas and involvement of Wiener. This split the emerging disciplines of artificial intelligence and Wiener’s ‘cybernetics’. The untimely deaths of Von Neumann and Alan Turing meant these giants were no longer present to inspire and perhaps unify the field.

Cybernetics (and more recently neocybernetics) has remained relatively obscure, but many are starting to realise that the discipline contains an array of ideas tailor-made for the intelligence revolution.

The key cybernetic themes are:

Feedback loops and self-regulation

Interdisciplinary collaboration and systems thinking

Self-organisation, adaptive replication and learning

Patterns such as these are to be found where complex systems are thriving… healthy habitats that self-regulate, adaptive and sustainable companies, and where high safety standards are attained, such as the critical information flows across the aviation industry. Perhaps we can gain most inspiration from a cybernetic appreciation of homeostasis; right now myriad biological systems are working to maintain a stability optimal for your survival and cognitive activity.
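To make the first of these themes concrete, here is a minimal sketch of a negative feedback loop, the basic mechanism behind homeostasis. It is an illustration only: the setpoint, starting value, and gain are assumed numbers, and the function names are hypothetical rather than drawn from any cybernetics library.

```python
def regulate(setpoint, reading, gain=0.5):
    """Return a correction proportional to the gap between target and reading."""
    return gain * (setpoint - reading)


def simulate(setpoint=37.0, start=40.0, steps=20, gain=0.5):
    """Apply the correction repeatedly and record how the value settles."""
    value = start
    history = [value]
    for _ in range(steps):
        # Negative feedback: the further we drift, the harder we push back.
        value += regulate(setpoint, value, gain)
        history.append(value)
    return history


history = simulate()
print([round(v, 2) for v in history[:5]])
print(round(history[-1], 3))
```

With a gain between 0 and 1 the gap to the setpoint shrinks at every step, so the system settles without any central plan for how it should get there. The same pattern, sense, compare, correct, is what the monitoring and control ideas in this article rely on.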

3Cs: Comprehension, control, and codification

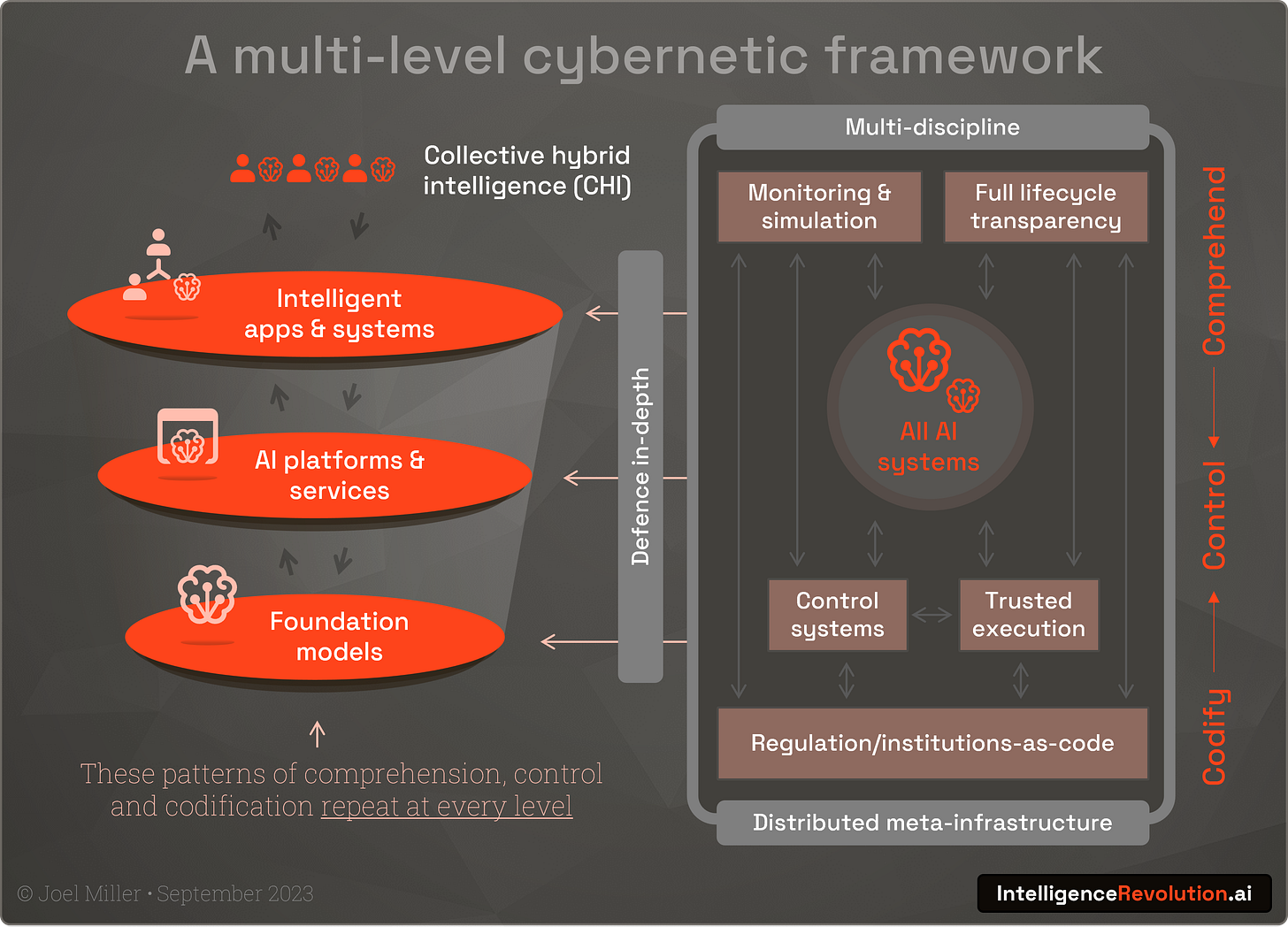

I propose a 3-pronged approach to deploying cybernetic systems-thinking and suggest that these ideas should be applied at multiple levels. This is not just vital to the world of foundation models (over which we currently obsess) but also to their platform and service ‘wrappers’, and ultimately to every use of these AI components in user-facing applications (or, as they can be thought of, intelligent systems that integrate both human and machine cognition).

Deep comprehension, or ‘society-in-the-loop’

Comprehension means ‘glass-box’ transparency through the full lifecycle of an intelligent system: from its purpose and ethical basis, through the sourcing of its training data, the methods used to reinforce and tune it, its operating model, security posture, energy footprint, trust and explainability, to the ways in which it will be operated and constrained post-deployment.

Monitoring, telemetry sharing, and warning systems must keep us informed, and widespread simulation with digital twins and AI meta-cognitive oversight (get the systems to ‘think about their thinking’) can mitigate emergent risk. Ultimately deep comprehension is about understanding intelligent systems from a range of stakeholder perspectives allowing for constant adaptation, calibrated trust and managed impact.

Contributors of training data are stakeholders, as much as individual users or those impacted by decision processes. All stakeholders, including system domain experts, should work more closely with technologists, and become co-investigators, co-researchers, and co-designers. An inclusive multidisciplinary cross section of society should always be ‘in the loop’.

Control can be distributed

While Suleyman highlights the choice between unrestricted rogue AI and dystopian levels of surveillance, distributed control systems could provide a third way. Multiple layers could be developed and run on web3 technologies. The Naoris Protocol is a great example of distributed verification in cyber security. These asymmetric control systems would be designed to act locally, and be kept small to guard against destructive conflict, but consume shared early-warning, telemetry, and simulation services to help societal alignment.

We should also explore and make much wider use of a combination of trusted execution techniques such as authentication networks, proof-carrying code or zero-knowledge-ML. Actors should be identified, the host AI hardware should verify policy adherence prior to execution, and the system should apply strict contractual controls around data transmission. In the longer term we must make progress on the idea that we will continually and mathematically prove an intelligent system is safe (and beneficial) at runtime.
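The three checks in that paragraph (identify the actor, verify policy adherence before execution, control where data goes) can be sketched as a simple admission gate. This is a toy under stated assumptions: `Actor`, `Job`, `Host`, and the policy and destination names are all hypothetical, and a real system would rely on cryptographic attestation rather than plain string comparison.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Actor:
    actor_id: str
    verified: bool  # assumed to be set by some external identity check


@dataclass(frozen=True)
class Job:
    actor: Actor
    model_policy: str          # policy version the workload claims to follow
    destinations: tuple = ()   # where the workload wants to send data


@dataclass
class Host:
    required_policy: str
    allowed_destinations: frozenset = field(default_factory=frozenset)

    def admit(self, job):
        """Return (ok, reasons); the job should run only if reasons is empty."""
        reasons = []
        if not job.actor.verified:
            reasons.append("actor identity not verified")
        if job.model_policy != self.required_policy:
            reasons.append("policy version mismatch")
        blocked = [d for d in job.destinations
                   if d not in self.allowed_destinations]
        if blocked:
            reasons.append("unapproved data destinations: " + ", ".join(blocked))
        return (not reasons, reasons)


host = Host(required_policy="policy-v2",
            allowed_destinations=frozenset({"audit-log"}))
good = Job(Actor("alice", True), "policy-v2", ("audit-log",))
bad = Job(Actor("mallory", False), "policy-v1", ("unknown-server",))
print(host.admit(good))
print(host.admit(bad))
```

The point of the design is that refusal is the default: the workload has to pass every check before it runs, and each refusal carries a reason that can be fed into shared telemetry.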

AI demands a rapid expansion and adoption of protocols such as Fact for combating fake news, and general ‘meta-infrastructure’. We need a global AI safety mesh; right now we’re deploying AI at a rapid pace, and the necessary infrastructure is far behind or non-existent.

Codification of everything

Government regulation seems to be the default answer to every difficult question in the AI safety debate, but it’s only a fraction of the playbook. Slow-moving regulatory bodies take years to enact what often turn out to be technically ambiguous or counterproductive laws.

Some broad and fundamental guardrails should be codified into national and supra-national laws, for example system auditing, rules on impersonation, and transparent ‘labelling’ requirements for all systems. Traditional regulatory instruments alone are not the answer, although stronger regulatory funding and oversight would help stimulate the right safety culture.

Real-time ‘regulation-as-code’ must express the many fine-grained and widespread machine-readable policies, seeded into our control systems and trusted meta-infrastructure. These policies will be the active blueprints for safe AI and will dynamically evolve to reflect the latest ethics, security, and safety norms, and allow for AI and crowd feedback. These new distributed institutions-as-code will be imperfect, but will provide flexible containment that could be quickly and adaptively dialled up as we approach thresholds on the risk curve.
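As a rough illustration of what a machine-readable policy might look like, the sketch below stores rules as data and activates more of them as an assumed risk level rises. The rule ids, the `min_risk` field, and the integer risk scale are all invented for this example; they do not come from Suleyman's book or from any existing standard.

```python
import json

# A hypothetical policy document: rules are data, so they can be
# versioned, shared, and updated without changing the checking code.
POLICY_JSON = """
{
  "version": "illustrative-1",
  "rules": [
    {"id": "label-ai-output", "min_risk": 0, "require": "output_labelled"},
    {"id": "audit-trail",     "min_risk": 1, "require": "audit_enabled"},
    {"id": "human-sign-off",  "min_risk": 2, "require": "human_approved"}
  ]
}
"""


def active_rules(policy, risk_level):
    """Rules switch on once the current risk level reaches their threshold."""
    return [r for r in policy["rules"] if r["min_risk"] <= risk_level]


def violations(policy, system, risk_level):
    """List the ids of active rules that the system does not satisfy."""
    return [r["id"] for r in active_rules(policy, risk_level)
            if not system.get(r["require"], False)]


policy = json.loads(POLICY_JSON)
system = {"output_labelled": True, "audit_enabled": True,
          "human_approved": False}

print(violations(policy, system, risk_level=1))  # []
print(violations(policy, system, risk_level=2))  # ['human-sign-off']
```

Raising `risk_level` is the ‘dial’: the same system that passes at level 1 is held back at level 2 until a human signs off, and nobody has had to redeploy any code to tighten the rules.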

Makers assemble

In summary

Mustafa Suleyman’s new book is a necessary read for all involved in technology given his balanced perspective and personal exposure to the frontier of AI development…

However, the general disagreements within wider AI discourse are distracting us from a clear view of risk and suitable processes for mitigation…

AI emergence poses an existential threat, but its risk curve may be quite shallow, although we’re suddenly moving much faster on that curve…

We know from history that we’re always caught out by this, but this time we can’t get it wrong…

Systems thinking, and the obscure science of cybernetics, explore how very complex things survive and thrive…

This knowledge seems more vital than ever, and there should be more than just a handful of people working on exploiting it…

If intelligent system design sounds complicated, think 3Cs: comprehension of what’s going on, control systems that are distributed, and codification of things like regulation and policy…

What next?

The AI Manhattan project has already started… in dev teams, standups, roadmap meetings, open-source forums, social media threads, meetup venues, and on countless laptops and data centre racks. Millions of brilliant software ‘makers’, engineers, product managers, designers, data scientists, solutions architects, citizen developers, and users are experimenting with the most powerful technology of all time.

But in this Manhattan project there are no specialised methods, no health and safety procedures, no accepted physics, no measures of the resultant risks, and no clear assessments of the net effects of all of this creativity. There are no Oppenheimers to calculate whether this will set fire to the atmosphere. Who is devising the minimum viable methods for safe intelligent system design?

If that sounds scary, it is, but it’s also an opportunity.

What if we could flip even 0.1% of this effort into capturing the methods we desperately need? These methods won’t come from the research labs, or big tech firms, or regulators, they’ll come from the grass-roots and hands-on experience. If we extract these methods successfully we can feed them back into the project, into the future. They could in turn help accelerate and embed the kinds of transparency, control and governance structures outlined in this article.

Suleyman ends his book with an impassioned plea for us all to get involved in a ‘generational mission’, to act, to seize the opportunity to secure our species’ long-term survival. How can we refuse?